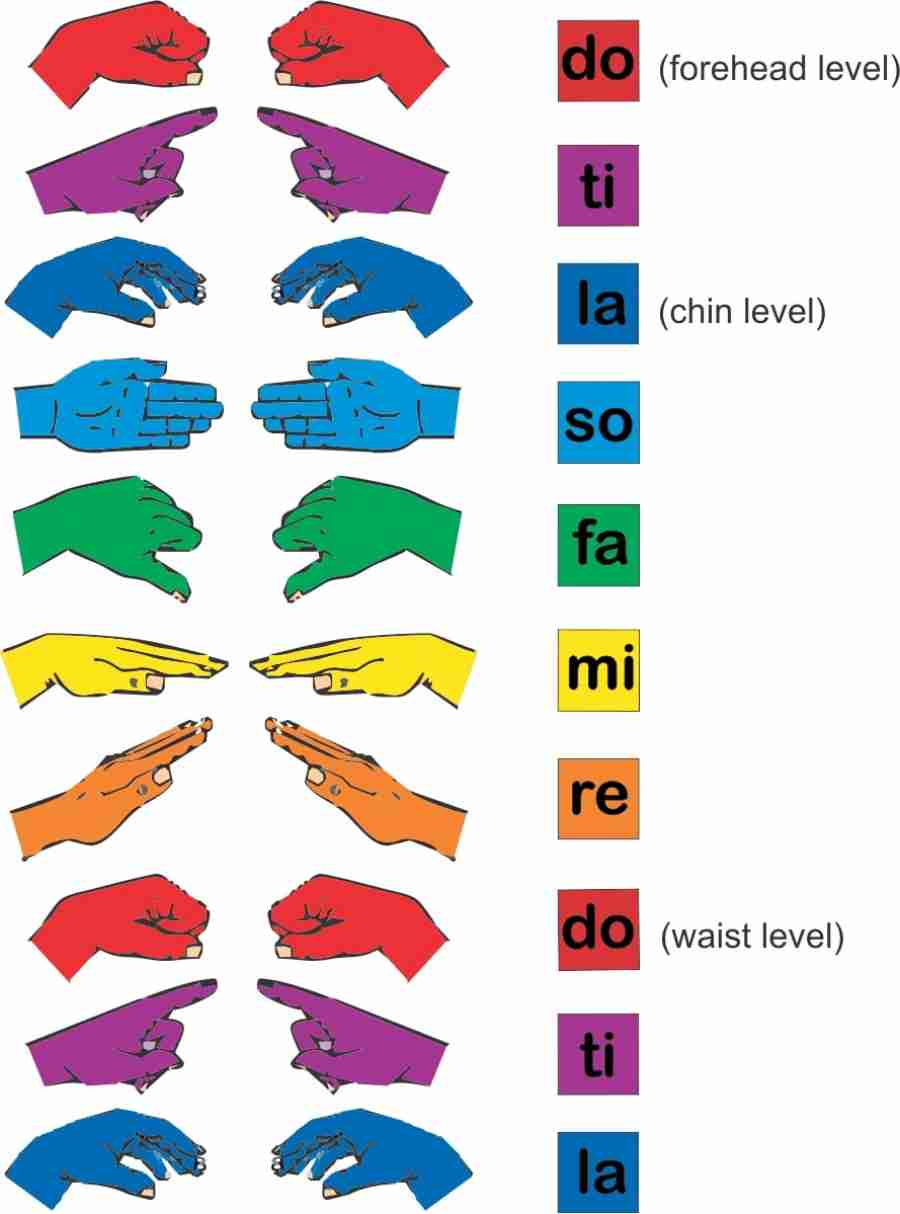

What Are Solfege Hand Signs?

Solfege hand signs, or more accurately Curwen hand signs, are hand movements that represent the different pitches in a scale. They are usually paired with the solfege syllables: do, re, mi, fa, sol, la, and ti.

Image from The Kodaly Method on Slideshare

These hand signs are also made at different heights to show the relative pitch intervals between the solfege pitches. For example, high do is signed just above head level.

5 Reasons To Use Solfege Hand Signs

In this section, we'll look at some of the main reasons teachers use solfege hand signs.

#1 Provides Movement Representing Pitch Intervals

Singing and hearing pitches can be difficult to do and to teach. Many of us have horror stories from our own sight-singing and ear-training classes, and it can be hard to think about putting our students through the same thing. Connecting an aural skill with different methods of learning can make developing that skill a lot easier (and more fun!).

Hand signs provide a kinesthetic way to show aural skills. Teachers use them to help the brain build new pathways to singing and reading skills. The key lies in the height difference you move to show each interval and in the distinct hand shape for each pitch.

Many music teachers also use hand signs to help develop inner hearing, or audiation. Inner hearing is the ability to internalize the pitches you're reading or thinking. Hand signs give students a tool to use while they hear inside, and they give teachers an easy visual way to assess how well students are comprehending. For example, students look at a new melody and sign the pitches while singing it silently in their heads.
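To make the idea of height-as-interval concrete, here is a minimal Python sketch. It is an illustration and not part of the Curwen method itself. It treats each syllable from do up to high do as its own height level. For each move in a melody, it reports whether the hand goes up, goes down, or stays level, how many levels it moves, and how many semitones that interval spans. It assumes a major scale in movable do. The names `LEVELS`, `SEMITONES`, and `hand_moves` are hypothetical, and real hand heights are set by the teacher rather than measured.

```python
# Assumption: major-scale, movable-do solfege from do up to high do.
# Each syllable gets its own height level (index in LEVELS).
LEVELS = ["do", "re", "mi", "fa", "sol", "la", "ti", "high do"]

# Semitones above do for each syllable in a major scale.
SEMITONES = dict(zip(LEVELS, [0, 2, 4, 5, 7, 9, 11, 12]))


def hand_moves(melody):
    """Return (direction, levels_moved, semitones) for each step in a melody.

    direction is "up", "down", or "same", mirroring whether the hand sign
    moves higher, lower, or stays at the same height.
    """
    moves = []
    for prev, curr in zip(melody, melody[1:]):
        steps = LEVELS.index(curr) - LEVELS.index(prev)  # change in height level
        semis = SEMITONES[curr] - SEMITONES[prev]        # change in pitch
        if steps > 0:
            direction = "up"
        elif steps < 0:
            direction = "down"
        else:
            direction = "same"
        moves.append((direction, abs(steps), abs(semis)))
    return moves


print(hand_moves(["do", "mi", "sol", "high do", "sol"]))
# → [('up', 2, 4), ('up', 2, 3), ('up', 3, 5), ('down', 3, 5)]
```

Do to mi and mi to sol both move the hand two levels, but they span 4 and 3 semitones respectively. The hand height shows the scale step, while the ear still has to supply the difference between a major and a minor third.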
